AI as a Thought Partner: Responsible AI in Classrooms

As AI becomes more deeply embedded in education, educators need to ask themselves: how do we guide students to use AI as a thought partner, not a shortcut to answers?

AI tools can spark curiosity, deepen understanding, and support creative problem-solving, but only when used thoughtfully. Without guidance, students may misuse these tools, rely on them too heavily, or overlook the critical thinking and reflection that lead to meaningful learning.

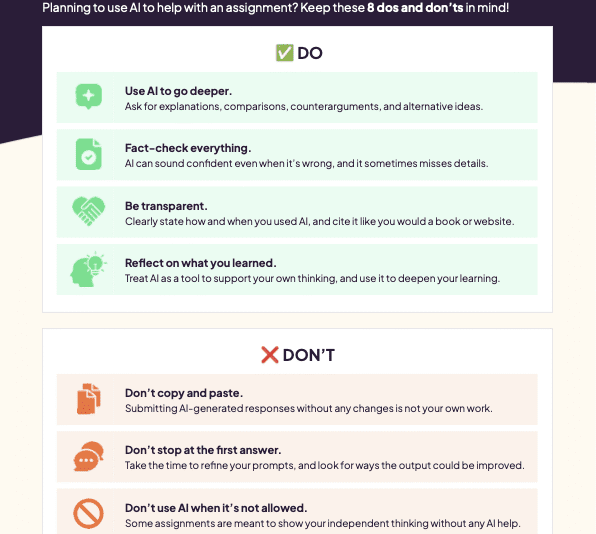

Here are eight Dos and Don’ts to help students use AI as a thought partner.

Using AI as a Thought Partner

Download the Dos and Don’ts to share with your students, or display them on your classroom wall!

DO: Use AI to go deeper.

AI becomes a powerful learning ally when it helps students stretch their thinking, challenge assumptions, and explore complex topics. Rather than closing off thought processes, it can spark questions and support inquiry-based learning. This is where responsible AI in classrooms shifts from answer-delivery to genuine intellectual growth. Students should ask AI to provide in-depth explanations, comparisons, counterarguments, and alternative ideas for them to consider.

DO: Fact-check everything.

AI can sound confident even when it’s wrong, and it sometimes misses key details. Students should treat AI tools with the same caution as any other online resource. Encourage them to verify facts by cross-referencing reliable sources and to follow up on anything unclear or questionable. Teaching critical evaluation not only protects against misinformation but also reinforces the role of AI in education as a guide for research rather than a guaranteed source of truth.

DO: Be transparent.

Students should clearly state how and when they have used AI, even citing it as they would a book or website where appropriate. Academic honesty applies just as much to digital tools as it does to traditional sources. Students should get used to acknowledging their use of AI as part of a transparent learning process. Schools can support this by developing clear citation guidelines and creating space for open conversations about what appropriate use looks like.

DO: Reflect on what you learned.

AI should be a tool that supports students’ own thinking and deepens their learning. When using AI, students should take time to process its responses and consider what those responses mean for their own learning. Reflection transforms AI-assisted work from passive consumption into active learning. Journals, exit tickets, and metacognitive discussions about how learning happens can help students build this habit.

DON’T: Copy and paste.

Students should understand that submitting an AI-generated response unchanged is not submitting their own work. It limits learning, risks plagiarism, and often undermines the purpose of the assignment itself. Instead of treating AI outputs as finished products, students should treat them as drafts to critique, build on, and personalise. Teachers can model this process to support a culture of responsible AI in classrooms, where originality and thinking still come first.

DON’T: Stop at the first answer.

Students should always refine their prompts and look for ways that the output could be improved. AI conversations should be iterative. Challenge your students to ask follow-up questions, request clarifications, and push for more depth. The first response from an AI tool is rarely the most complete or accurate, and re-prompting helps students engage more actively with the content. This aligns with critical thinking goals and supports more thoughtful use of AI in education.

DON’T: Use AI when it’s not allowed.

Some assignments are designed to show independent thinking and must be completed without AI assistance. Knowing when not to use digital tools, and drawing purely on your own knowledge and ideas instead, is just as important as learning how to use them well. Teachers should always make these boundaries clear to students when setting assignments.

DON’T: Give AI personal or private information.

Students must understand that anything they type into an AI tool may be stored or processed by the tool’s provider, so personal data needs careful handling. Remind them not to enter full names, addresses, phone numbers, school logins, or anything they would not share publicly. A strong digital safety mindset is critical for productive and secure use of AI in education, especially as more tools become commonplace in students’ daily lives.

At Kognity, we believe that preparing students for a future shaped by technology means helping them develop the right habits around responsible AI in classrooms. Rather than spreading fear or even trying to avoid AI altogether, schools can guide their students towards using it with intention, caution, and academic integrity, as a thought partner instead of a “cheat code”.
